ABSTRACT

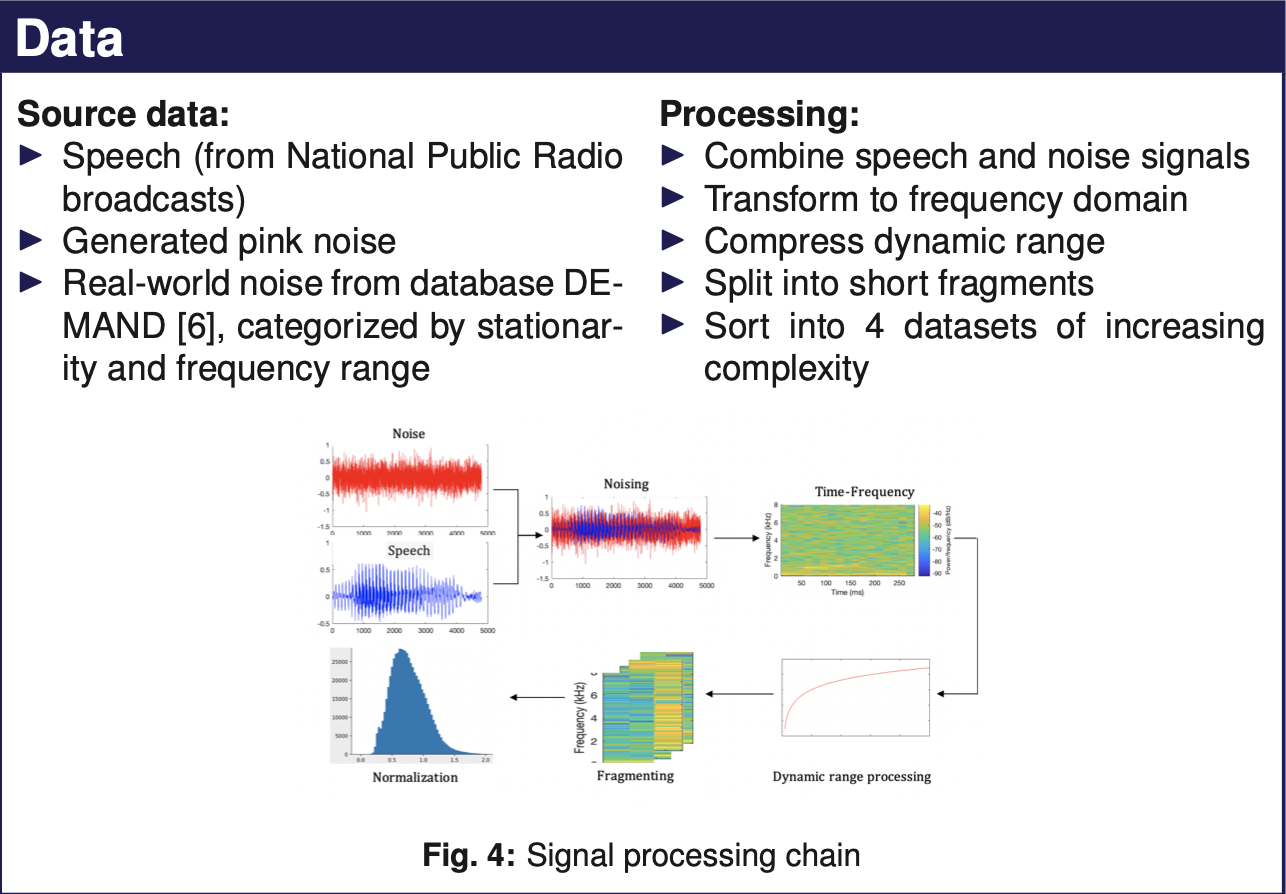

The aim of this work is to implement a single-channel speech enhancement algorithm using deep neural networks. Several models are devised and inspected, with architectures inspired by state-of-the-art models such as fully convolutional autoencoders and convolutional-recurrent networks. The experimental adoption of Gated Recurrent Units (GRUs) and novel Temporal Convolutional Networks (TCNs) in place of Long Short-Term Memory (LSTM) layers is presented and discussed. The models are trained on several tiers of datasets, composed of speech data from news broadcasts mixed with pink noise and with different real-world noises. Different data representations are tested, all of them based on the spectrogram. The performance of each model is evaluated in terms of speech quality and intelligibility using the Blind Source Evaluation, Perceptual Evaluation of Speech Quality (PESQ) and Short-Time Objective Intelligibility (STOI) metrics. The results are compared to those of a state-of-the-art baseline noise reduction system, and the relevant findings are presented and discussed.